There's been some chatter among meteorologists in recent days about unusually poor forecast performance by the U.S. Global Forecast System, a model that predicts the global circulation for weather forecasts out to 15 days. Summer is a more challenging time for weather forecasts in general, because relatively unpredictable small-scale features are more important than in winter. But even compared to normal for the time of year, the recent performance has been poor.

The pair of maps below shows a 7-day forecast of 500mb height anomaly (departure from normal) on the left and the ensuing verification on the right; the forecast was issued on May 14. While some of the features were correctly anticipated, the forecast was badly wrong from easternmost Siberia to Alaska and also in western Europe. The spatial correlation of anomalies ("anomaly correlation") was only 0.26.

Here's the same pair of maps but for the 7-day forecast from ECMWF. It's widely known that ECMWF's data assimilation and modeling methods produce generally superior weather forecasts compared to GFS, but in this case the difference was dramatic.

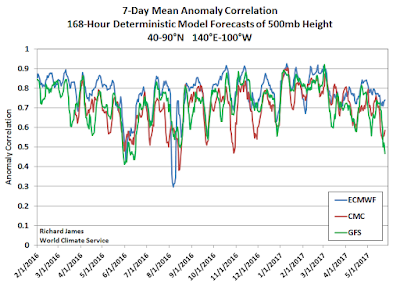

To put this event in context, I calculated the daily anomaly correlations over the Northern Hemisphere north of 20°N for 7-day forecasts back to early 2016, and I also looked at Environment Canada's global model forecasts (labeled as "CMC" in the charts below). The first chart shows the dramatic drop-off in skill in recent days for both GFS and CMC; both models have suffered the same fate, but ECMWF has remained relatively unscathed. Note, however, that ECMWF is not without fault - it had a remarkable failure last summer.
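For anyone curious what an anomaly correlation calculation can look like, here is a minimal sketch in Python. It is not the exact code behind the charts: the function name, the list-of-lists grid layout, the cos(latitude) area weighting, and the use of the "centered" form (area-mean anomaly removed) are all assumptions made for illustration.

```python
import math

def anomaly_correlation(forecast, observed, climatology, lats, min_lat=20.0):
    """Centered, area-weighted spatial correlation of anomalies.

    forecast, observed, climatology: 2-D lists indexed [lat][lon], same shape
    (e.g. 500mb heights). lats: latitude in degrees for each row.
    Rows south of min_lat are ignored. This layout is hypothetical.
    """
    weights, f_anom, o_anom = [], [], []
    for i, lat in enumerate(lats):
        if lat < min_lat:
            continue
        # Grid boxes shrink toward the pole, so weight each row by cos(latitude)
        w = math.cos(math.radians(lat))
        for j in range(len(forecast[i])):
            weights.append(w)
            f_anom.append(forecast[i][j] - climatology[i][j])
            o_anom.append(observed[i][j] - climatology[i][j])

    if not weights:
        raise ValueError("no grid points at or north of min_lat")

    wsum = sum(weights)
    # Weighted area means of the forecast and observed anomalies
    f_mean = sum(w * f for w, f in zip(weights, f_anom)) / wsum
    o_mean = sum(w * o for w, o in zip(weights, o_anom)) / wsum

    cov = sum(w * (f - f_mean) * (o - o_mean)
              for w, f, o in zip(weights, f_anom, o_anom))
    f_var = sum(w * (f - f_mean) ** 2 for w, f in zip(weights, f_anom))
    o_var = sum(w * (o - o_mean) ** 2 for w, o in zip(weights, o_anom))
    # Note: a perfectly flat anomaly field gives zero variance and fails here
    return cov / math.sqrt(f_var * o_var)
```

A forecast whose anomaly pattern matches the verification exactly scores 1, a forecast with the pattern exactly reversed scores -1, and a score near 0 means the forecast anomalies bear little resemblance to what happened.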

If we plot the differences between the models, we see that the ECMWF forecasts have outperformed more dramatically in recent days than at any other time in the past year or so.

Zooming in on the North Pacific region (see below), there is of course more noise in the statistics, but similar patterns are evident. The CMC model has not fared quite as badly as GFS in recent days, but again the ECMWF has performed much better than the other two models.

In conclusion - let's hope the current episode proves to be instructive for the model developers so that the science can continue to advance. NOAA scientists are paying attention to these forecast skill "dropouts" - see for example the article beginning on page 5 of the following newsletter:

https://www.jcsda.noaa.gov/documents/newsletters/2017_02JCSDAQuarterly.pdf

The bigger picture from the results I've shown here is that modern global circulation models now have the ability to predict weather patterns out to 7 days or more with fairly good skill on average, and I find that to be a remarkable achievement.

The language in the JCSDA piece is kind of dense for an amateur like me, but there are a couple of things I don't understand:

1) The ECMWF clearly outperforms the GFS on a regular basis, so why don't they make the GFS more like the ECMWF? Are there data inputs or proprietary knowledge about the model that are unavailable to American forecast modelers?

2) Are American forecasters leaning more heavily on the ECMWF due to its effectiveness?

Good questions... the main issue is organizational, I think. The ECMWF has steadily moved ahead with innovation and technology, but the US has lagged. Cliff Mass writes about all of this - he has strong opinions, and I don't agree with him on everything, but you might find it interesting, e.g.

http://cliffmass.blogspot.com/2016/10/us-operational-numerical-weather.html

As for (2), yes - anyone that can get their hands on it uses ECMWF heavily. Most of the forecast data is available only for a hefty price, but the NWS has access.

Just FYI…NWS Forecast Offices have access to operational ECMWF at 6 hour intervals, but with only a fraction of the kinds of info available from the US and Canadian models. Mostly standard level type info. Operational forecasters in field offices don't have access to, say, detailed ECMWF vertical profiles or integrated variables (say, CAPE). --Rick

That's very interesting, Rick. News to me. Thanks.

Did read Cliff's notes. Interesting and not atypical of the upper layers of government versus private industry that's motivated by competition and profit.

The local NWS in Alaska is very good to us...no complaints and they're always willing to interact and help.

Gary

Comparing the models like you've done here shows why forecasters usually look at multiple models and use intuitive judgement in fine-tuning a forecast. It also shows why forecasts from a single automated model (like many popular commercial products) are often so wrong.

I too find it amazing how accurate forecasting is getting at a week out. It seems to fall apart only under the most dynamic conditions. Even there better data and better resolution would fill in the gap.